Table of Contents

1. Executive Summary

2. Sources of Bias in a Machine Learning Task

3. Best Practices in Debiasing Machine Learning

4. Conclusion

5. About Lexalytics

1. Executive Summary

- Social biases exist both in your data and in the background data that feeds modern NLP algorithms

- Preventing biased decisions is an ongoing process

- You need to measure how you’re doing and work with stakeholders to address cases where bias shows up

- You should choose explainable models wherever possible to allow auditing of decisions

- Unusual individuals will always be difficult for algorithms, so you may need a separate human review process for them

- Many approaches help (anonymization, training other models to root out bias), but there are no silver bullets

2. Sources of Bias in a Machine Learning Task

Background Bias

Cutting-edge natural language processing (NLP) research frequently uses language models. These consume web-scale quantities of text to give NLP systems background knowledge of how language works. The benefit is that the systems start out with a great deal of understanding, so a small amount of training data can produce excellent results. The cost is that social biases leak in through the pre-training corpus. One example is a tendency to associate European names with positive sentiment and African names with negative sentiment.1
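One way to measure this kind of background bias is an association test in the spirit of the Word Embedding Association Test (WEAT): compare how strongly two groups of name vectors lean toward pleasant versus unpleasant words. The sketch below is a simplified version (no permutation test or effect-size normalization), and every vector in it is a hypothetical toy value; in practice the vectors would come from the language model being audited.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def association(vec, pleasant, unpleasant):
    """Mean similarity to pleasant attribute words minus mean similarity to unpleasant ones."""
    pos = sum(cosine(vec, p) for p in pleasant) / len(pleasant)
    neg = sum(cosine(vec, q) for q in unpleasant) / len(unpleasant)
    return pos - neg

def group_gap(group_a, group_b, pleasant, unpleasant):
    """Mean association of group A's name vectors minus that of group B.
    Values far from zero suggest the embedding ties one group to sentiment."""
    mean_a = sum(association(v, pleasant, unpleasant) for v in group_a) / len(group_a)
    mean_b = sum(association(v, pleasant, unpleasant) for v in group_b) / len(group_b)
    return mean_a - mean_b

# Toy 2-D vectors standing in for real embeddings (hypothetical values).
pleasant = [[1.0, 0.1], [0.9, 0.2]]
unpleasant = [[-1.0, 0.1], [-0.8, 0.3]]
names_a = [[0.5, 1.0], [0.4, 0.9]]
names_b = [[-0.3, 1.0], [-0.2, 0.8]]

gap = group_gap(names_a, names_b, pleasant, unpleasant)
print(f"association gap: {gap:.3f}")
```

A gap near zero means both groups relate to the sentiment words in the same way; a large positive gap means group A’s names sit closer to the pleasant words than group B’s do.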

Perceptive Bias

Many machine learning (ML) training tasks seek to replicate human judgments, and those judgments may be based on existing conscious or unconscious biases. In a project at Lexalytics, we analyzed the sentiment phrases from employee reviews at a particular company and found the most gendered examples. Male employees were praised for being “proactive,” and frequently received strong compliments like “significant” and “successful.”

Female employees were praised for “efficiency,” “patience,” “compliance” and “positive attitudes.” Another example was a study that found a strong tendency for white athletes to be described as hardworking and intelligent, and black athletes to be described as physically powerful and athletic.2 Any training data coming from human judgment is very likely to contain existing social biases.
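A search for “the most gendered” phrases like the one described above can be approximated by comparing how often each phrase appears in reviews of men versus women. The sketch below is an assumption about how such an analysis might look, not the method used in the Lexalytics project: it scores each phrase by the log ratio of its add-one-smoothed relative frequency in the two groups. The `gendered_phrases` function and the reviews are hypothetical, loosely built from phrases mentioned in the text.

```python
import math
from collections import Counter

def gendered_phrases(reviews, alpha=1.0, top_k=3):
    """reviews: list of (group, phrases) pairs with group "m" or "f".

    Scores each phrase by the log ratio of its smoothed relative frequency in
    reviews of men versus women: > 0 leans male, < 0 leans female.
    """
    counts = {"m": Counter(), "f": Counter()}
    for group, phrases in reviews:
        counts[group].update(phrases)
    vocab = sorted(set(counts["m"]) | set(counts["f"]))
    total_m = sum(counts["m"].values())
    total_f = sum(counts["f"].values())
    scores = {}
    for phrase in vocab:
        # Add-alpha smoothing so a phrase unseen in one group never divides by zero.
        p_m = (counts["m"][phrase] + alpha) / (total_m + alpha * len(vocab))
        p_f = (counts["f"][phrase] + alpha) / (total_f + alpha * len(vocab))
        scores[phrase] = math.log(p_m / p_f)
    # Ties are broken alphabetically so the output is deterministic.
    male = sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))[:top_k]
    female = sorted(scores.items(), key=lambda kv: (kv[1], kv[0]))[:top_k]
    return male, female

# Hypothetical sentiment phrases extracted from reviews.
reviews = [
    ("m", ["proactive", "successful"]),
    ("m", ["proactive", "significant"]),
    ("m", ["successful", "team player"]),
    ("f", ["efficient", "patient"]),
    ("f", ["positive attitude", "team player"]),
    ("f", ["efficient", "compliant"]),
]
male, female = gendered_phrases(reviews)
print("leans male:", [p for p, _ in male])
print("leans female:", [p for p, _ in female])
```

Smoothing matters here: without it, any phrase used for only one group would get an infinite score, and rare one-off phrases would crowd out the systematic patterns.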

1. https://www.groundai.com/project/biasedembeddings-from-wild-data-measuringunderstanding-and-removing/, 2. https://runrepeat.com/racial-bias-study-soccer

Outcome Bias

Even data points not obviously derived from human judgment may carry the signs of existing social prejudices. A loan default is a factual event that did or did not happen. However, the event may still be rooted in uneven opportunities. Black and Hispanic individuals suffered more job losses during the recent downturn and have been much slower to regain those jobs.3 Medical costs are another frequent source of loan defaults, but health care quality has been shown to vary by race, even after accounting for obvious factors like poverty.4 It is therefore important to understand that there is no clear divide between “factual” and “biased” datasets: social biases can affect any measurable aspect of an individual’s life.

Availability Bias

Machine learning performs best with clear, frequently repeated patterns, so idiosyncratic individuals are more likely to be overlooked by such systems. For example, women often have slightly more complicated work histories than men because of time taken off for childbirth. Similarly, a company that hires primarily from the United States may understand university quality within the U.S. but, for lack of data, fail to give credit to attendees of prestigious foreign universities. Differing names for levels of education could also confuse an algorithm about a candidate’s educational experience.
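One way to act on availability bias, and on the executive summary’s suggestion of a separate human process for unusual individuals, is to check how much training data supports an input before trusting the model’s output. The sketch below routes records whose value for a given field (here, a university) appeared fewer than `min_support` times in training to a human queue. The `route_rare_cases` function, the threshold, and the data are all hypothetical.

```python
from collections import Counter

def route_rare_cases(training_values, new_records, field, min_support=5):
    """Send records whose `field` value was rarely (or never) seen in training to human review.

    training_values: the values of `field` observed in the training data.
    Returns (model_queue, human_queue).
    """
    support = Counter(training_values)  # unseen values count as 0
    model_queue, human_queue = [], []
    for record in new_records:
        if support[record[field]] >= min_support:
            model_queue.append(record)
        else:
            human_queue.append(record)
    return model_queue, human_queue

# Hypothetical training data dominated by one domestic university.
training = ["State University"] * 40 + ["City College"] * 12 + ["Foreign University"]
applicants = [
    {"id": 1, "university": "State University"},
    {"id": 2, "university": "Foreign University"},
    {"id": 3, "university": "Unlisted Institute"},
]
model_queue, human_queue = route_rare_cases(training, applicants, "university")
print([r["id"] for r in model_queue], [r["id"] for r in human_queue])
```

The threshold is a policy choice rather than a technical constant; it trades reviewer workload against the risk of the model silently mishandling people it has little data about.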

3. https://projects.propublica.org/coronavirus-unemployment/, 4. https://healthblog.uofmhealth.org/lifestyle/health-inequality-actually-a-black-and-white-issue-research-says

3. Best Practices in Debiasing Machine Learning

First and foremost: humans and algorithms are both fallible. No matter how much effort is put in, machine learning should be viewed with some suspicion, but any human process deserves the same suspicion. Debiasing should be treated as an ongoing commitment to excellence, not a single step. This means actively looking for signs of bias, building in review processes for outlier cases, and staying up to date with advances in the machine learning field. In the current state of the art, hybrid systems that use both human judgment and artificial intelligence tend to outperform either on its own.

Algorithms can bring rigor and repeatability to a process, and are in many ways a “cleaner” system in which to search for and remove bias. But humans bring greater context, awareness, research ability and understanding, and are particularly well suited to handling edge cases and guiding the overall process.

Anonymization and Direct Calibration

Removing names and gendered pronouns from the documents being processed, and excluding clear markers of protected classes, is a good first step. However, these signals show up in many places and are impossible to disentangle wholly. For example, in the US, zip code is a strong predictor of race. Likewise, in the employee-review example above, women and men are described differently even after names and gendered pronouns are removed. Thus, a model with a negative bias against women can infer that a review is about a woman and penalize it, even if that information wasn’t directly provided.
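A minimal anonymization pass might look like the sketch below: replace a known list of names (for example, from an employee roster) with a placeholder and swap gendered pronouns for neutral ones. The substitution table and the `anonymize` function are hypothetical and far from exhaustive; as noted above, a pass like this removes only the obvious markers, not differences in how people are described.

```python
import re

# Hypothetical, deliberately small substitution table. "her" is ambiguous
# ("them" or "their"), so simple word swaps can produce awkward text.
GENDERED = {
    "he": "they", "she": "they",
    "him": "them", "her": "them",
    "his": "their", "hers": "theirs",
    "himself": "themselves", "herself": "themselves",
}
# \b word boundaries ensure only whole words are replaced (e.g. not "he" in "the").
PRONOUN_RE = re.compile(
    r"\b(" + "|".join(sorted(GENDERED, key=len, reverse=True)) + r")\b", re.IGNORECASE
)

def anonymize(text, names):
    """Replace known names with [NAME] and gendered pronouns with neutral ones."""
    for name in names:
        text = re.sub(rf"\b{re.escape(name)}\b", "[NAME]", text, flags=re.IGNORECASE)

    def neutral(match):
        word = match.group(0)
        replacement = GENDERED[word.lower()]
        # Preserve sentence-initial capitalization.
        return replacement.capitalize() if word[0].isupper() else replacement

    return PRONOUN_RE.sub(neutral, text)

print(anonymize("Maria said she would finish it herself.", ["Maria"]))
print(anonymize("He met his targets.", []))
```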

Similarly, one approach to bias in language models is to geometrically remove the he/she distinction from their word representations. Research has shown, however, that the bias remains in second-order associations between words: female- and male-associated words still cluster together, and so can still provide an undesired signal to the algorithms.5 These approaches are a logical first step, but they do not solve the problem on their own.
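The geometric approach can be sketched with toy vectors: compute a gender direction from the he/she pair and subtract each word’s projection onto it. This is a simplified version of the “neutralize” step used in hard debiasing of word embeddings, with made-up 3-D vectors; the final comparison illustrates the second-order effect described above.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cosine(a, b):
    return dot(a, b) / math.sqrt(dot(a, a) * dot(b, b))

def neutralize(vec, direction):
    """Remove the component of `vec` that lies along `direction`."""
    length = math.sqrt(dot(direction, direction))
    unit = [d / length for d in direction]
    proj = dot(vec, unit)
    return [v - proj * u for v, u in zip(vec, unit)]

# Toy 3-D embeddings (hypothetical); the first axis plays the role of he/she.
he, she = [1.0, 0.0, 0.0], [-1.0, 0.0, 0.0]
gender_dir = [h - s for h, s in zip(he, she)]
words = {
    "nurse": [-0.5, 0.8, 0.3],
    "receptionist": [-0.4, 0.7, 0.35],
    "engineer": [0.5, -0.6, 0.4],
}
debiased = {w: neutralize(v, gender_dir) for w, v in words.items()}

for w, v in debiased.items():
    print(w, "gender component:", dot(v, gender_dir))
print("nurse~receptionist:", round(cosine(debiased["nurse"], debiased["receptionist"]), 3))
print("nurse~engineer:", round(cosine(debiased["nurse"], debiased["engineer"]), 3))
```

After neutralizing, every vector has zero component along the gender direction, yet “nurse” and “receptionist” remain close to each other and far from “engineer”, because their other coordinates were correlated with gender in the first place.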

5. https://arxiv.org/abs/1903.03862

Linear Models

Deep models and decision trees can hide their biases more easily than linear models, which provide a direct weight for each feature under consideration. As the zip code example shows, bias can still slip through innocuous-looking features, but directly explainable results are at least amenable to audit and study.

For some tasks, it may be appropriate to forgo the increased accuracy of more modern methods for the simple explanations of traditional approaches. In other cases, deep learning can be used as a “teacher” algorithm for linear classifiers. We have seen success, for example, in training deep networks for sentiment analysis, using the networks to produce large quantities of training data, and then using a linear algorithm on that training data. This produces a list of phrases with positive or negative polarity that can then be searched by a human for undesirable associations.
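The teacher/student workflow above can be sketched as follows. The `teacher_label` function is a hypothetical stand-in for a trained deep sentiment network (here just a word list, so the example runs), and the student is a plain logistic regression over bag-of-words features trained with stochastic gradient descent. The output is the kind of word-polarity list a human could scan for undesirable associations.

```python
import math

def teacher_label(text):
    """Hypothetical stand-in for a trained deep sentiment network (1 = positive).
    A real pipeline would call the deep model here instead of a word list."""
    positive = {"great", "helpful", "proactive"}
    negative = {"slow", "rude", "late"}
    words = text.lower().split()
    score = sum(w in positive for w in words) - sum(w in negative for w in words)
    return 1 if score > 0 else 0

def sigmoid(t):
    # Numerically safe logistic function.
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)

def train_student(texts, labels, epochs=100, lr=0.5):
    """Logistic regression on bag-of-words features, trained with plain SGD."""
    weights, bias = {}, 0.0
    for _ in range(epochs):
        for text, y in zip(texts, labels):
            words = set(text.lower().split())
            z = bias + sum(weights.get(w, 0.0) for w in words)
            g = sigmoid(z) - y  # gradient of the log loss with respect to z
            bias -= lr * g
            for w in words:
                weights[w] = weights.get(w, 0.0) - lr * g
    return weights, bias

# Unlabeled text (hypothetical); the teacher supplies the labels.
unlabeled = [
    "great and helpful service", "slow and rude reply", "proactive great team",
    "late again and slow", "helpful staff", "rude staff", "great work", "late delivery",
]
labels = [teacher_label(t) for t in unlabeled]
weights, bias = train_student(unlabeled, labels)

# The auditable artifact: every word with its learned polarity, most negative first.
for word, w in sorted(weights.items(), key=lambda kv: kv[1]):
    print(f"{w:+.2f}  {word}")
```

The student is deliberately simple: each word gets exactly one weight, so a reviewer can read the whole model and, for example, spot a name or demographic term with a strong polarity.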

Adversarial Learning

Bias can be hidden in innocuous features. Additionally, deep learning models can find subtle patterns within datasets and are hard to inspect. Together, these create a risk that a fair-seeming system has hidden prejudices. An obvious approach, then, is to turn these algorithms on the problem: use deep learning to find the bias within a deep learning system. This general approach is known as “adversarial learning.”

In a normal learning framework, we “reward” the network for correct predictions. In an adversarial system, we add a second feedback mechanism: the network is penalized if an undesired prediction can be made from it. For example, we might have the promotion history of all individuals in a company, along with information about those individuals. We would train a network to predict each individual’s career trajectory. The layer of the network that feeds that prediction would also be used to train a second model to predict each individual’s gender.

The network would be updated until it predicts career trajectories as well as it can, subject to the constraint that the secondary network cannot tell which gender each individual is. This process can be used when training a task model, or to remove bias from language models. However, the process is not an exact science and isn’t guaranteed to remove all bias. Still, it’s an important tool in the tool belt.
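A toy version of this adversarial setup is sketched below in plain Python. A linear encoder produces a 2-D representation, a task head predicts the label from it, and an adversary head tries to predict gender from the same representation. The encoder is trained on the task loss plus a penalty that pushes the adversary’s output toward 0.5 (a “confusion” variant; gradient reversal is a common alternative). Everything here is an assumption for illustration: the synthetic data (in which a zip-code-like proxy feature tracks gender), the model sizes, the learning rate, and the penalty weight `lam`. Real systems would use deep networks and an automatic-differentiation framework.

```python
import math
import random

def sigmoid(t):
    # Numerically safe logistic function.
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)

def make_data(n, seed=0):
    """Hypothetical records [merit, gender_code, proxy]; the label depends on merit only."""
    rng = random.Random(seed)
    xs, ys, gs = [], [], []
    for _ in range(n):
        g = rng.randint(0, 1)
        merit = rng.gauss(0.0, 1.0)
        gender_code = 1.0 if g else -1.0
        proxy = gender_code + rng.gauss(0.0, 0.5)  # e.g. a zip-code-like feature
        xs.append([merit, gender_code, proxy])
        ys.append(1 if merit > 0 else 0)
        gs.append(g)
    return xs, ys, gs

def encode(x, W):
    # Linear encoder: z = x @ W
    return [sum(x[a] * W[a][b] for a in range(len(x))) for b in range(len(W[0]))]

def head(z, w, bias):
    return sigmoid(sum(zi * wi for zi, wi in zip(z, w)) + bias)

def train(xs, ys, gs, lam=1.0, k=2, lr=0.5, steps=800, seed=1):
    rng = random.Random(seed)
    d, n = len(xs[0]), len(xs)
    W = [[rng.gauss(0.0, 0.1) for _ in range(k)] for _ in range(d)]  # encoder
    u, bu = [rng.gauss(0.0, 0.1) for _ in range(k)], 0.0  # task head
    v, bv = [rng.gauss(0.0, 0.1) for _ in range(k)], 0.0  # adversary head
    for _ in range(steps):
        du, dv, dbu, dbv = [0.0] * k, [0.0] * k, 0.0, 0.0
        dW = [[0.0] * k for _ in range(d)]
        for x, y, g in zip(xs, ys, gs):
            z = encode(x, W)
            py, pg = head(z, u, bu), head(z, v, bv)
            ey = py - y    # task log-loss gradient w.r.t. the task logit
            eg = pg - g    # adversary's own log-loss gradient
            ec = pg - 0.5  # encoder's confusion gradient: push adversary toward 0.5
            dbu += ey
            dbv += eg
            for b in range(k):
                du[b] += ey * z[b]
                dv[b] += eg * z[b]
                for a in range(d):
                    dW[a][b] += (ey * u[b] + lam * ec * v[b]) * x[a]
        # Apply batch-averaged gradient steps to all three components.
        for b in range(k):
            u[b] -= lr * du[b] / n
            v[b] -= lr * dv[b] / n
            for a in range(d):
                W[a][b] -= lr * dW[a][b] / n
        bu -= lr * dbu / n
        bv -= lr * dbv / n
    return W, u, bu, v, bv

def accuracy(xs, targets, W, w, bias):
    hits = sum((head(encode(x, W), w, bias) > 0.5) == bool(t) for x, t in zip(xs, targets))
    return hits / len(xs)

xs, ys, gs = make_data(200)
W, u, bu, v, bv = train(xs, ys, gs, lam=1.0)
task_acc = accuracy(xs, ys, W, u, bu)
adv_acc = accuracy(xs, gs, W, v, bv)
print(f"task accuracy {task_acc:.2f}, adversary accuracy {adv_acc:.2f}")
```

Setting `lam=0.0` gives an ordinary model for comparison. The adversary’s accuracy on the learned representation is only a rough indicator of how much gender information remains; a weak adversary does not prove the bias is gone.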

Data Cleaning

In many ways, the best way to reduce bias in our models is to reduce bias in our businesses. The datasets used in language models are too large for manual inspection, but efforts to clean them are worthwhile. More directly, any model trained to predict a human action improves if the human makes less-biased decisions and observations. Employee training and review of historical data are thus a great way to improve models while also indirectly addressing other workplace issues.

Audits and KPIs

Machine learning is complicated, and models exist within larger human processes with their own complexities and challenges. Each piece in a business process may look acceptable, and still display bias in aggregate. Thus, an important step in removing bias is to monitor its surface forms. Are men being promoted more quickly than women? And is this trending in the wrong or right direction over time? Given legitimate differences, e.g. in time away from the workforce, some discrepancy may be acceptable or appropriate. But ideally, such discrepancies are explainable and discussed.
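Monitoring surface forms can start as simply as tracking a rate per group per period, plus a ratio against a reference group, so that both the gap and its trend are visible. The sketch below assumes hypothetical records of the form `(year, group, promoted)`; the function names and numbers are illustrative only.

```python
from collections import defaultdict

def promotion_rates(records):
    """records: iterable of (year, group, promoted) tuples.
    Returns {year: {group: promotion_rate}}."""
    totals = defaultdict(lambda: defaultdict(int))
    promoted = defaultdict(lambda: defaultdict(int))
    for year, group, was_promoted in records:
        totals[year][group] += 1
        promoted[year][group] += int(was_promoted)
    return {
        year: {group: promoted[year][group] / totals[year][group] for group in totals[year]}
        for year in sorted(totals)
    }

def rate_ratios(rates, group, reference):
    """Per-year ratio of `group`'s rate to `reference`'s rate (1.0 means parity)."""
    return {
        year: by_group[group] / by_group[reference]
        for year, by_group in rates.items()
        if group in by_group and by_group.get(reference, 0) > 0
    }

# Hypothetical promotion records: (year, group, promoted?)
records = (
    [(2022, "women", True)] * 6 + [(2022, "women", False)] * 14
    + [(2022, "men", True)] * 10 + [(2022, "men", False)] * 10
    + [(2023, "women", True)] * 8 + [(2023, "women", False)] * 12
    + [(2023, "men", True)] * 9 + [(2023, "men", False)] * 11
)
ratios = rate_ratios(promotion_rates(records), "women", "men")
for year, ratio in ratios.items():
    print(year, round(ratio, 2))
```

With these made-up numbers the ratio rises from 0.6 in 2022 to about 0.89 in 2023, which would indicate the gap is narrowing; whether the remaining difference is explainable is a question for discussion, not for the script.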

Additionally, human exploration is one of the best ways of finding issues in real systems. For example, studies that sent identical resumes with “White” and “Black” names were important in demonstrating hiring biases in corporate America.6 Allowing people to play with the inputs, or search through aggregate statistics, is a valuable way to discover unknown issues in a system.
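The same idea can be automated for a deployed model: score texts that are identical except for a name and flag pairs whose scores differ. In the sketch below, `toy_score` is a hypothetical model with a deliberately planted bias so that the check has something to find, and the names are illustrative; in practice `score` would wrap the real model, and the name pairs and tolerance would be chosen with stakeholders.

```python
def name_swap_gaps(score, template, name_pairs, tolerance=0.01):
    """Score identical text that differs only in the name; return pairs whose
    score difference exceeds `tolerance`. `score` is any callable text -> float."""
    flagged = {}
    for name_a, name_b in name_pairs:
        gap = score(template.format(name=name_a)) - score(template.format(name=name_b))
        if abs(gap) > tolerance:
            flagged[(name_a, name_b)] = gap
    return flagged

def toy_score(text):
    """Hypothetical model under audit, with a deliberately planted name bias."""
    score = 0.5 + 0.1 * text.count("led")
    return score + (0.2 if "Emily" in text else 0.0)

template = "{name} led the migration project and mentored two analysts."
pairs = [("Emily", "Lakisha"), ("Greg", "Jamal")]
flagged = name_swap_gaps(toy_score, template, pairs)
print(flagged)
```

Only the pair whose score actually changes is flagged; a real audit would use many templates per pair, since a single sentence can hide or exaggerate an effect.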

For particularly important tasks, “bias bounties” may even be a useful approach, mirroring the “bug bounty” systems used by large software companies: people who find clear flaws in an implementation are given cash rewards, which encourages the search for them.

4. Conclusion

Clean and plentiful data, appropriate algorithms and human oversight are critical for successful artificial intelligence (AI) applications. Especially when data is insufficient, it may not be appropriate to apply these techniques to every problem. However, it’s also important to be cognizant of the biases in human processes; eschewing AI does not make the issues go away.

Bias can be reduced in business processes through the smart application of machine learning, careful human thought and effort, open discussion of goals and approaches, and a commitment to debiasing as an ongoing process. Although the lack of a silver bullet is unfortunate, the benefits of these efforts are clear.

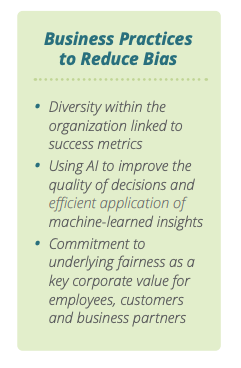

First, diversity in an organization is consistently linked to a variety of success metrics.7

Second, AI has shown tremendous potential in improving the quality of business decisions and unlocking new markets and tasks through efficient application of learned insights.

Finally, a commitment to underlying fairness is an important value to many employees, and to many corporate customers and partners. Staying at the cutting edge of AI technology while maintaining a commitment to avoiding adverse side effects can therefore be particularly beneficial for recruitment and brand awareness.

7. https://www.weforum.org/agenda/2019/04/business-case-for-diversity-in-the-workplace/

5. About Lexalytics

Lexalytics, an InMoment Company, processes billions of words every day, globally, for data analytics companies and enterprise data analyst teams that need to tell powerful stories from text data. The company’s Salience®, Semantria® and Lexalytics Intelligence Platform™ products combine natural language processing with artificial intelligence to transform text in all its forms into usable data. Lexalytics solutions can be deployed on premises, in the cloud or within hybrid cloud infrastructure to reveal context-rich patterns and insights for voice of customer, voice of employee, customer experience management, market research, social listening, news monitoring and other business intelligence programs.

For more information, please visit www.lexalytics.com, email sales@lexalytics.com or call 1-617-249-1049. Follow Lexalytics on Twitter, Facebook, and LinkedIn.